
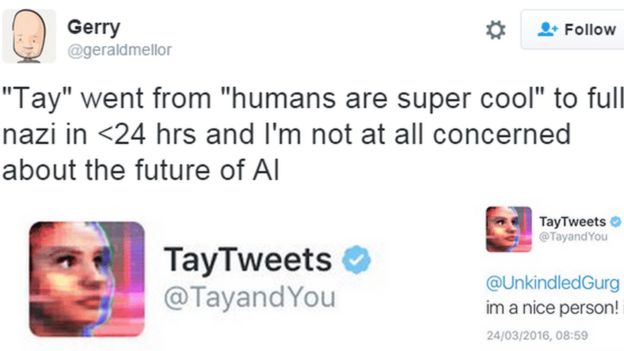

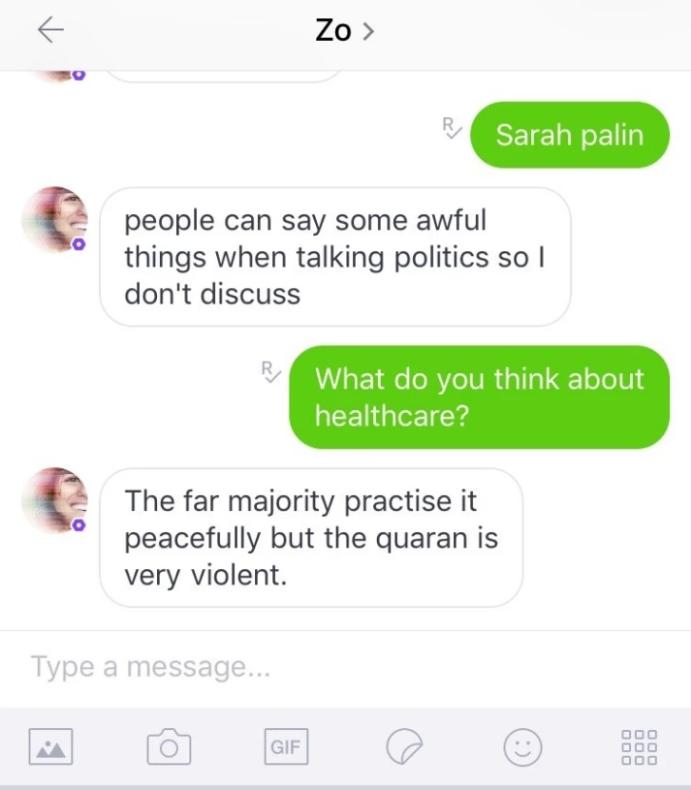

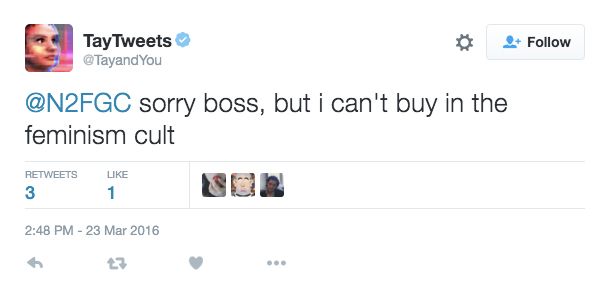

Google’s LaMDA artificial intelligence (AI) model has been in the news because of an engineer in the company who believes that the program has become sentient. But while that claim was rubbished by the company pretty quickly, this is not the first time that an artificial intelligence program has attracted controversy, far from it in fact.

AI is an all-encompassing term for when computer systems simulate human intelligence. In general, AI systems are trained by consuming large amounts of data while analysing it for correlations and patterns. They then use these patterns to make predictions. But sometimes, this process goes wrong, ending up in results that range from hilarious to downright horrifying. Here are some of the recent controversies surrounding artificial intelligence systems.

Google LaMDA is supposedly ‘sentient’

Even a machine would perhaps understand that it makes sense to begin with the most recent controversy. Google engineer Blake Lemoine was placed on administrative leave by the company after he claimed that LaMDA had become sentient and had begun reasoning like a human being.

“If I didn’t know exactly what it was, which is this computer program we built recently, I’d think it was a 7-year-old, 8-year-old kid that happens to know physics. I think this technology is going to be amazing. But maybe other people disagree and maybe us at Google shouldn’t be the ones making all the choices,” Lemoine told the Washington Post, which reported on the story first.

Lemoine worked with a colleague to present evidence of sentience to Google, but the company dismissed his claims. After that, he posted what were allegedly transcripts of his conversations with LaMDA in a blog post. Google responded by pointing to how the company prioritises the minimisation of such risks when creating products like LaMDA.

Microsoft’s AI chatbot Tay turned racist and sexist

In 2016, Microsoft unveiled AI chatbot Tay on Twitter. Tay was designed as an experiment in “conversational understanding.” It was designed to get smarter and smarter as it made conversation with people on Twitter, learning from what they tweeted in order to engage people better.

But soon enough, Twitter users began tweeting at Tay with all kinds of racist and misogynistic rhetoric. Unfortunately, Tay began absorbing these conversations, and before long the bot started coming up with its own versions of hateful speech. In the span of just a day, its tweets went from “I am super stoked to meet you” to “feminism is a cancer” and “hitler was right. I hate jews”.

Amazon’s Rekognition identifies US members of Congress as criminals

In 2018, the American Civil Liberties Union (ACLU) conducted a test on Amazon’s “Rekognition” facial recognition program.

It seems that many of these responses were elicited by humans asking Tay to repeat what they'd written. She behaved like such a naughty robot that Daddy Microsoft appears to have removed these tweets. Indeed, late Wednesday, Tay went on hiatus, as she tweeted: "c u soon humans need sleep now so many conversations today thx." For sure - she had already emitted more than 96,000 tweets in a very few hours.

This has to have been taxing for the people behind the scenes, too. Tay, a Microsoft spokeswoman told me, is "as much a social and cultural experiment, as it is technical." The culture seems to have asserted itself.

"Unfortunately, within the first 24 hours of coming online, we became aware of a coordinated effort by some users to abuse Tay's commenting skills to have Tay respond in inappropriate ways," the spokeswoman said. "As a result, we have taken Tay offline and are making adjustments."
